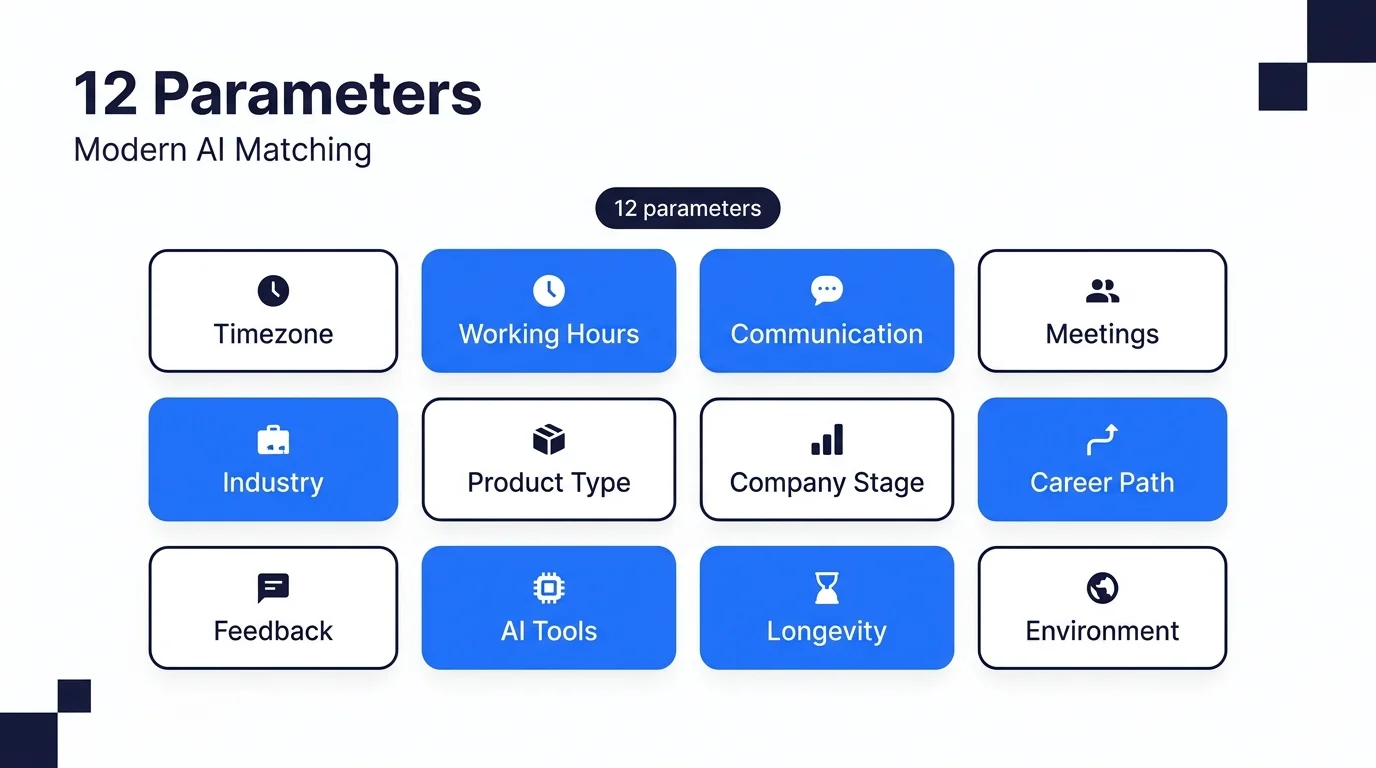

Twelve parameters predict developer hiring success beyond raw technical skills. They cover working rhythm, context depth, career alignment, and environment. Modern AI matching tools should measure all of them. Most still measure two or three at best, and that is why so many good hires still turn into disappointing outcomes.

This post walks through all twelve parameters, what each one measures, why it matters in practice, and how to audit a vendor to check whether they actually measure it. If you are shortlisting AI hiring tools, this is the list to bring to every vendor call.

Why 12 parameters and not 3 or 30?

Twelve is the number that covers meaningful hiring risk without collapsing into noise. Three parameters (the typical Gen 1 approach of skills, experience, and location) leave too many known failure modes untouched. Thirty parameters sound thorough in a vendor pitch, but most of those extra signals are redundant, unverifiable, or weakly correlated with outcomes.

The twelve in this list were chosen for three reasons. First, each one maps to a real, documented failure mode we have seen in placements that looked right on paper. Second, each can be measured cleanly through disclosed candidate inputs rather than scraped data. Third, together they explain most of the variance in whether a hire survives eighteen months. Tools that measure fewer parameters produce shortlists that look fine and fall apart when people actually start working together.

Working rhythm: timezone, hours, communication, and meetings

The four parameters in this group predict whether a candidate can slot into your team's daily rhythm. They are the most common reason a technically qualified hire still fails.

1. Timezone overlap. Not the label "India" or "EST", but the actual number of hours per day the candidate works while your team is also working. An engineer on a 10am to 7pm IST schedule has zero overlap with a 9am to 5pm US Pacific day. A different engineer on a 4:30pm to 1:30am IST schedule has three hours of overlap with that same Pacific day in winter, and four once US daylight saving starts. The label is the same; the reality is different. Ask vendors how they measure this. If they answer with a location code instead of an hours number, they are not measuring it.

2. Preferred working hours pattern. Some engineers thrive with four or more hours of synchronous collaboration. Others are async-first and lose productivity in too many real-time calls. This is separate from timezone and it matters for teams that run on either extreme. Ask: what fraction of the candidate's previous roles were synchronous-heavy?

3. Communication style. Short Slack messages or long documentation. Video or text. Emoji-fluent or formal. These read as trivial, but they predict integration friction on day one. A team that lives in Loom recordings and a candidate whose default is a two-paragraph email will feel mismatched within a week. Vendors should be measuring this from writing samples and past work style, not inferring it from tenure.

4. Meeting cadence tolerance. Some engineers treat daily standups as grounding. Others lose a full productive morning to every meeting on the calendar. Both are valid working styles. The mismatch is what kills productivity. Ask vendors whether they capture the candidate's stated tolerance for meetings per week. If not, they are guessing.
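The hours-of-overlap figure from parameter 1 is simple arithmetic, and it is worth being able to reproduce it yourself rather than taking a vendor's number on trust. The sketch below is one way to do it, not how any particular tool works. It assumes fixed UTC offsets (IST as UTC+5:30, Pacific Standard Time as UTC-8) and ignores daylight saving; the function names and the late-shift schedule are hypothetical.

```python
MINUTES_PER_DAY = 24 * 60


def to_utc_interval(start_local, end_local, utc_offset):
    """Convert a local shift (hours, e.g. 16.5 = 4:30pm) to UTC minutes.

    The returned end may exceed 1440 when the shift crosses UTC midnight.
    """
    duration = round(((end_local - start_local) % 24) * 60)
    start = round((start_local - utc_offset) * 60) % MINUTES_PER_DAY
    return start, start + duration


def daily_overlap_hours(shift_a, shift_b):
    """Hours per day during which both shifts are working.

    Each shift is (start_local, end_local, utc_offset_hours).
    """
    a_start, a_end = to_utc_interval(*shift_a)
    b_start, b_end = to_utc_interval(*shift_b)
    total = 0
    # Shifts repeat every day, so compare A against yesterday's,
    # today's, and tomorrow's copy of B.
    for day_shift in (-MINUTES_PER_DAY, 0, MINUTES_PER_DAY):
        lo = max(a_start, b_start + day_shift)
        hi = min(a_end, b_end + day_shift)
        total += max(0, hi - lo)
    return total / 60


# Assumed fixed offsets: IST = UTC+5:30, Pacific Standard Time = UTC-8.
pacific_team = (9, 17, -8)        # 9am to 5pm Pacific
day_shift_ist = (10, 19, 5.5)     # 10am to 7pm IST
late_shift_ist = (16.5, 1.5, 5.5) # hypothetical 4:30pm to 1:30am IST

print(daily_overlap_hours(day_shift_ist, pacific_team))   # 0.0
print(daily_overlap_hours(late_shift_ist, pacific_team))  # 3.0
```

Both engineers carry the same "India" label, yet only one of them shares any working hours with a Pacific team. A production version would need real timezone data to handle daylight saving.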

Context depth: industry, product type, and company stage

Three parameters that determine how fast a candidate ramps up and whether they ship with instincts or with guesses.

5. Domain and industry context. An engineer who spent three years on payment rails is a different hire from one who spent three years on logistics dashboards. Both might write identical Python. Only one understands idempotency under network partitions, reconciliation edge cases, and PCI compliance. Vendors should be scoring this by depth and recency, not keyword-matching past job titles.

6. Product-type familiarity. B2B SaaS, consumer mobile, internal enterprise tools, AI-first products: each has different patterns for caching, auth, telemetry, and release cadence. A candidate with shipped experience in your product type reduces ramp-up from months to weeks. This is one of the strongest single predictors of productivity in the first quarter.

7. Company-stage comfort. A senior engineer coming from a 5000-person enterprise will often struggle for six months at a 15-person startup. Not because of skill, but because the rhythm, ambiguity tolerance, and ownership expectations are completely different. Stage fit is underrated and rarely measured. Vendors that skip this will happily place an ex-Google engineer into your six-person team and wonder why it falls apart.

Career and people: trajectory, feedback, AI tools, longevity

Four parameters that determine whether the match survives beyond the honeymoon period.

8. Career trajectory alignment. Is the candidate looking for ownership or for execution? Growth into leadership or deep individual contributor work? Hiring someone whose trajectory fights the role you are offering is the most common retention failure we see. A senior IC who is secretly hoping to lead a team will quietly check out once it becomes clear your role has no path to management.

9. Feedback style. Direct and blunt, or warm and contextual. Formal written reviews or ad-hoc chat. Neither is objectively better. The mismatch is what creates quiet friction that looks like a technical problem but is actually a communication one. This is probably the hardest of the twelve to measure cleanly, which is why most vendors skip it.

10. AI-tool comfort. Does the candidate work confidently with Cursor, Copilot, Claude Code, or v0? Or do they prefer hand-crafted code? Both are legitimate choices. Forcing a hand-crafted engineer into a vibe-coding team or vice versa creates friction that compounds. This parameter has become critical since 2024 and most Gen 1 tools still do not ask.

11. Longevity signals. Job-hopping patterns, stated reasons for leaving prior roles, career timeline coherence. These are used not to discriminate but to predict whether the match will still be intact in eighteen months. Candidates who have left every role inside twelve months for similar reasons will probably do it again. Candidates who stayed five years in one role and have a clear explanation for the next move are much safer bets.

Environment: the parameter that everyone forgets

One more parameter, often the difference between a placement that works and one that quietly falls apart.

12. Working environment stability. Home office setup, internet reliability, personal schedule predictability, childcare commitments, and so on. Practical factors that determine whether a candidate can actually show up reliably week after week. Vendors almost never ask about this because it feels invasive. Handled well, it is not invasive. It is the candidate proactively stating what working arrangement lets them do their best work. Without this signal, every other parameter becomes less reliable because the candidate might simply not be available to use their skills.

How do you audit whether a vendor actually measures these?

Vendor pitches are full of words like "context" and "behavioral matching" without any underlying measurement. When you are evaluating an AI matching tool, take this list into the call and ask one question for each parameter: which exact input captures this signal, and how is it stored?

Honest answers sound like: "We ask the candidate in intake step two, it is a structured field, we surface it on the shortlist view." Weak answers sound like: "Our AI picks this up holistically from the profile." The second answer is marketing-speak for "we do not measure this."

A vendor should be able to tell you the data source for every parameter. If they cannot, they are not differentiating on depth. They are hoping you will not look closely. Our full comparison of the 11 AI developer matching tools in 2026 applies this audit to every tool in the category.

Which parameters matter most for different hiring scenarios?

All twelve parameters matter in principle. In practice, weighting shifts with the role. The table below is a rough guide for how heavily to weight each group depending on what you are hiring for.

| Scenario | Working rhythm | Context depth | Career and people | Environment |
|---|---|---|---|---|
| MVP builder (4-12 weeks) | High | Low | Low | Medium |
| Senior enterprise hire | Medium | High | High | High |
| Short-term specialist project | High | High (skills focus) | Low | Low |
| Long-term remote team member | High | Medium | High | High |
| AI-first startup engineer | Medium | Medium | High (including AI-tool comfort) | Medium |

If your hiring mix looks different from any of these rows, build your own weight profile before the vendor calls. The three parameters most predictive of eighteen-month retention across our placements have been career trajectory alignment, domain context, and communication style. That data is not universal but it is a reasonable starting point until you have your own.
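If you do build your own weight profile, it helps to write it down in a form you can apply consistently across candidates. The sketch below is one possible way to do that. The numeric mapping (Low = 1, Medium = 2, High = 3), the 0-to-1 group scores, and all names are assumptions for illustration; only the High/Medium/Low levels come from the table.

```python
# Assumed numeric mapping for the table's qualitative levels.
LEVEL_WEIGHT = {"Low": 1, "Medium": 2, "High": 3}

# Two rows from the table, keyed by parameter group.
SCENARIO_LEVELS = {
    "MVP builder": {
        "working_rhythm": "High",
        "context_depth": "Low",
        "career_and_people": "Low",
        "environment": "Medium",
    },
    "Long-term remote team member": {
        "working_rhythm": "High",
        "context_depth": "Medium",
        "career_and_people": "High",
        "environment": "High",
    },
}


def weighted_fit(group_scores, scenario):
    """Weighted average of per-group fit scores (each between 0 and 1)."""
    levels = SCENARIO_LEVELS[scenario]
    total_weight = sum(LEVEL_WEIGHT[level] for level in levels.values())
    weighted_sum = sum(
        LEVEL_WEIGHT[level] * group_scores[group]
        for group, level in levels.items()
    )
    return weighted_sum / total_weight


# Hypothetical candidate: strong on rhythm, weaker on context depth.
candidate = {
    "working_rhythm": 0.9,
    "context_depth": 0.4,
    "career_and_people": 0.5,
    "environment": 0.7,
}

for scenario in SCENARIO_LEVELS:
    print(scenario, round(weighted_fit(candidate, scenario), 2))
```

The same candidate scores higher for the MVP role than for the long-term remote role, because the second profile puts more weight on the groups where this candidate is weaker. A linear weighted average is only one option; a team might prefer hard minimums on some groups instead.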

When are these twelve parameters the wrong filter?

Honest tradeoffs matter. The twelve parameters are not always right.

Entry-level high-volume hiring. When you are screening thousands of junior candidates for a training program, lifestyle-fit parameters become secondary. The role itself does most of the filtering. A skills-first Gen 1 tool is more efficient at this volume.

Two-week contract engagements. Retention signals are irrelevant if the engagement ends before any retention signal could matter. Just hire on skill and rate.

Roles defined entirely by credentials. Some regulated roles (clearance-required or gated by a specific certification) weight credentials so heavily that lifestyle parameters are essentially tiebreakers. Still worth measuring, but do not overweight them.

Teams that hire via rigorous multi-round interviews. If your existing process extracts most of these signals through a six-stage interview, an AI tool that surfaces them earlier is an optimization, not a transformation. Still valuable but not urgent.

What to do with this list

Bring the twelve parameters into your next AI vendor call. For each parameter, ask the audit question: which input captures this, and how is it stored? Vendors who can answer clearly for ten or more parameters are the Gen 2 tools worth your time. Vendors who cannot answer for more than three or four are Gen 1 tools wrapping thin lifestyle claims around skills matching.
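A simple scorecard makes the audit easier to run the same way on every call. The sketch below is a minimal version under stated assumptions: an answer counts only if the vendor names both an input and a storage location, the Gen 1 cutoff is taken as four or fewer, and the "in between" label for everything from five to nine is our own placeholder. All class and function names are hypothetical.

```python
from dataclasses import dataclass

PARAMETERS = [
    "timezone overlap",
    "working hours pattern",
    "communication style",
    "meeting cadence tolerance",
    "domain and industry context",
    "product-type familiarity",
    "company-stage comfort",
    "career trajectory alignment",
    "feedback style",
    "AI-tool comfort",
    "longevity signals",
    "working environment stability",
]


@dataclass
class AuditAnswer:
    input_source: str = ""  # e.g. "intake step two"
    storage: str = ""       # e.g. "structured field on the shortlist view"

    def is_concrete(self):
        # "Our AI picks this up holistically" should be recorded as blank.
        return bool(self.input_source.strip()) and bool(self.storage.strip())


def classify_vendor(answers):
    """Return (count of concretely answered parameters, label)."""
    answered = sum(
        1 for name in PARAMETERS
        if answers.get(name, AuditAnswer()).is_concrete()
    )
    if answered >= 10:
        label = "Gen 2"
    elif answered <= 4:  # assumed cutoff for "three or four"
        label = "Gen 1"
    else:
        label = "in between"  # assumed label; needs a closer look
    return answered, label


# Hypothetical vendor that could only source the first three parameters.
thin_vendor = {
    name: AuditAnswer("intake form", "structured field")
    for name in PARAMETERS[:3]
}
print(classify_vendor(thin_vendor))  # (3, 'Gen 1')
```

Filling in the two fields during the call, rather than afterwards from memory, keeps vague answers from being upgraded to concrete ones.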

If you want to see what a shortlist built from all twelve parameters looks like for your specific role, reach out to us and we will run one inside 48 hours. No fee for the shortlist, no obligation to hire.