AI developer matching is the automated process of scoring and ranking candidates against a role using structured signals instead of manual resume review. In 2026, two generations of tools do this very differently: Gen 1 matches on skills extracted from resumes, while Gen 2 matches on 12 lifestyle and behavioral parameters alongside skills. Both follow the same six-stage pipeline but disagree on what data counts as signal.

If the Gen 1 and Gen 2 terminology is new to you, start with our primer on what lifestyle-fit matching is and why skills-only AI keeps failing. That post defines the category, names the Gen 1 tools (Eightfold, SeekOut, HireEZ), and explains why the industry is shifting toward Gen 2. This post picks up from there and walks through the six-stage pipeline inside every AI matching system and where each stage breaks.

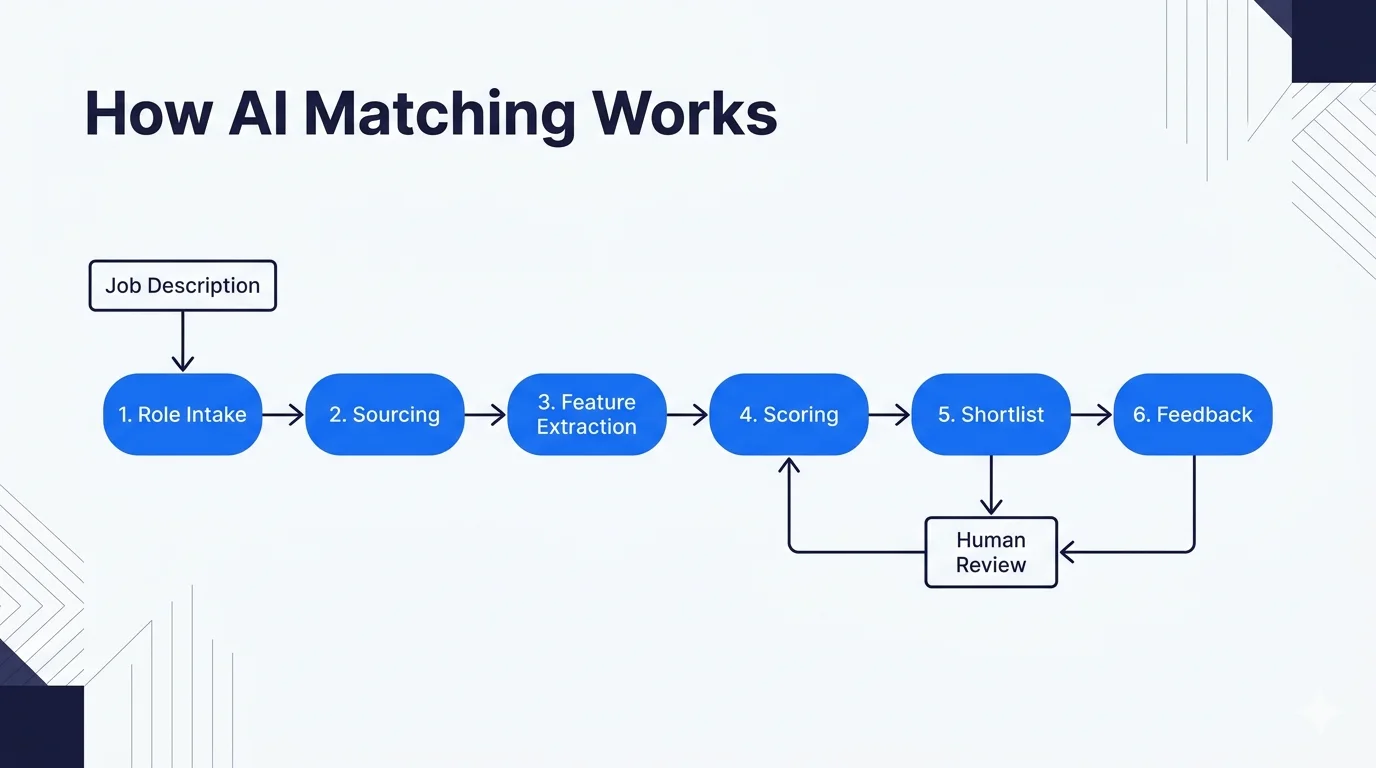

What happens when you submit a role to an AI matching tool?

Every AI developer matching system, Gen 1 or Gen 2, follows the same six-stage pipeline. What differs is the inputs each stage accepts and the weights the scoring stage applies.

- Role intake. The system ingests the job description, required skills, seniority band, and compensation range. Gen 2 systems also ingest lifestyle requirements: timezone overlap, communication cadence, industry, company stage.

- Candidate pool sourcing. The tool pulls candidates from its index: a proprietary pool (marketplaces), an external index (SeekOut, HireEZ scraping public profiles), or both.

- Feature extraction. Each candidate is parsed into a feature vector: skills, years of experience, past titles, education, project history. Gen 2 systems add behavioral features: writing samples, stated preferences, communication patterns from interviews.

- Scoring. A model (historically a gradient-boosted tree, increasingly a fine-tuned LLM) scores each candidate against the role. Weights are tuned based on past placements.

- Ranking and shortlist generation. Top candidates are ranked. Gen 1 usually returns 50 to 200. Gen 2 returns 3 to 10.

- Human review and feedback loop. Recruiters or hiring managers review the shortlist, accept or reject candidates, and that signal feeds back to retrain the model.
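As a rough illustration of stages 1 through 5, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Role` and `Candidate` shapes, the two features, and the weighted sum that stands in for a trained model. It is not how any named vendor implements its pipeline.

```python
from dataclasses import dataclass, field


@dataclass
class Role:
    skills: set[str]
    seniority: str
    # Gen 2 intake adds lifestyle requirements; left empty for a Gen 1 style intake.
    lifestyle: dict[str, str] = field(default_factory=dict)


@dataclass
class Candidate:
    name: str
    skills: set[str]
    lifestyle: dict[str, str] = field(default_factory=dict)


def extract_features(candidate: Candidate, role: Role) -> dict[str, float]:
    """Stage 3: turn a candidate into numeric features relative to the role."""
    skill_overlap = len(candidate.skills & role.skills) / max(len(role.skills), 1)
    if role.lifestyle:
        matched = sum(candidate.lifestyle.get(k) == v for k, v in role.lifestyle.items())
        lifestyle_fit = matched / len(role.lifestyle)
    else:
        lifestyle_fit = 0.0
    return {"skill_overlap": skill_overlap, "lifestyle_fit": lifestyle_fit}


def score(features: dict[str, float], weights: dict[str, float]) -> float:
    """Stage 4: a weighted sum standing in for a trained model (hypothetical)."""
    return sum(weights.get(name, 0.0) * value for name, value in features.items())


def shortlist(role: Role, pool: list[Candidate], weights: dict[str, float], size: int) -> list[str]:
    """Stages 2 to 5: score every candidate in the pool and keep the top `size`."""
    scored = [(score(extract_features(c, role), weights), c.name) for c in pool]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in scored[:size]]


role = Role(
    skills={"react", "node", "aws"},
    seniority="senior",
    lifestyle={"timezone": "UTC+1", "cadence": "async"},
)
pool = [
    Candidate("Ana", {"react", "node", "aws"}, {"timezone": "UTC-8", "cadence": "sync"}),
    Candidate("Ben", {"react", "node"}, {"timezone": "UTC+1", "cadence": "async"}),
]

gen1_weights = {"skill_overlap": 1.0}                        # skills only
gen2_weights = {"skill_overlap": 0.5, "lifestyle_fit": 0.5}  # illustrative split

print(shortlist(role, pool, gen1_weights, size=2))
print(shortlist(role, pool, gen2_weights, size=2))
```

With these made-up inputs, the skills-only weights rank Ana first, and the blended weights rank Ben first. The pipeline code is identical in both runs; only the intake data and the weights change.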

Where does Gen 1 AI matching break?

Gen 1 tools (Eightfold, SeekOut, HireEZ) broke the manual resume-screening bottleneck. They also inherited all its failure modes and added a few new ones.

Resume-as-truth assumption. Gen 1 extracts features from resumes. Resumes are self-reported, keyword-stuffed, and optimized for applicant tracking systems. A candidate who writes "led team of 12" and one who writes "individual contributor on a team of 12" show up the same in a feature vector.
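To see how that collapse can happen, consider a deliberately naive keyword extractor. This is a hypothetical sketch, not the parser any named tool uses; the `KNOWN_SKILLS` set and the `team of N` pattern are assumptions chosen to mirror the example above.

```python
import re

# Hypothetical skill vocabulary; real systems use far larger taxonomies.
KNOWN_SKILLS = {"react", "node.js", "aws"}


def extract_resume_features(text: str) -> dict[str, object]:
    """Naive extraction: a team-size regex plus substring skill matching."""
    lowered = text.lower()
    team = re.search(r"team of (\d+)", lowered)
    return {
        "team_size": int(team.group(1)) if team else None,
        # Substring matching is crude on purpose; it ignores role and context.
        "skills": sorted(s for s in KNOWN_SKILLS if s in lowered),
    }


lead = extract_resume_features("Led team of 12 building React and AWS services")
ic = extract_resume_features(
    "Individual contributor on a team of 12 building React and AWS services"
)

print(lead)
print(lead == ic)
```

Both resumes produce the same features here, because nothing in the extractor captures the candidate's role on the team. A more careful parser would narrow the gap, but it would still be reading self-reported text.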

Skills without context. Two candidates both list "React, Node.js, AWS". One spent three years building payment flows for a neobank. The other spent three years building dashboards for a logistics startup. Gen 1 matches them equivalently. They are not equivalent.

No longevity signal. Gen 1 tools rarely try to predict whether a placement will last 18 months. They optimize for the match event, not the retention outcome. This is why companies keep running the same pipeline a year later: placements fail quietly and the cycle restarts.

External data scraping risk. SeekOut, HireEZ, and others enrich candidate profiles by scraping public sources. A January 2026 FCRA class action against Eightfold alleges this compilation constitutes unregistered Consumer Reporting Agency behavior. The legal exposure here is not theoretical.

How is Gen 2 matching different at each stage?

Gen 2 tools (SethAI and a few emerging others) address the Gen 1 failure modes by changing what counts as signal and how signals are collected.

| Stage | Gen 1 approach | Gen 2 approach |
|---|---|---|
| Role intake | Skills + seniority + location label | Skills + seniority + timezone hours overlap + industry + communication style + longevity target |
| Sourcing | Resume indexes, public profile scraping | Disclosed consent pool, structured interview data, writing samples |
| Feature extraction | NLP over resume text | NLP over resume + behavioral features + self-reported preferences |
| Scoring | Skill-match weight dominant | Skill match + lifestyle-fit score + longevity prediction weighted together |
| Shortlist size | 50 to 200 candidates | 3 to 10 candidates |
| Feedback signal | Did candidate get an interview | Did the hire stay and perform at 3, 6, 12 months |

The most important row is the last one. Gen 1 learns from whether candidates were interviewed. Gen 2 learns from whether the hire actually worked. These optimize for different outcomes even when the pipeline structure looks the same.
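The difference in feedback signal is easiest to see as training labels. The sketch below is an assumption-laden illustration: the `Outcome` record, the label functions, and the single 12-month threshold are all hypothetical simplifications of the 3, 6, and 12 month check-ins in the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    candidate: str
    interviewed: bool
    hired: bool
    months_retained: Optional[int]  # None if the candidate was never hired


def gen1_label(o: Outcome) -> int:
    """Gen 1 style label: positive if the candidate reached an interview."""
    return int(o.interviewed)


def gen2_label(o: Outcome, target_months: int = 12) -> int:
    """Gen 2 style label: positive only if the hire lasted the target term."""
    return int(
        o.hired
        and o.months_retained is not None
        and o.months_retained >= target_months
    )


history = [
    Outcome("A", interviewed=True, hired=True, months_retained=14),
    Outcome("B", interviewed=True, hired=True, months_retained=4),
    Outcome("C", interviewed=True, hired=False, months_retained=None),
    Outcome("D", interviewed=False, hired=False, months_retained=None),
]

print([gen1_label(o) for o in history])
print([gen2_label(o) for o in history])
```

On this made-up history, three of the four candidates count as positives under the interview label, and only one counts under the retention label. A model trained on the first set learns what recruiters pick; a model trained on the second learns what lasts.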

What data does an AI matching tool actually need?

Buyers often assume AI matching tools need minimal input: paste the job description and go. That is true for Gen 1 (and part of why it produces loose matches). Gen 2 needs more, and it is worth understanding what before evaluating tools.

The role definition. Title, skills, seniority, compensation, location constraints. Standard across both generations.

Your team's working context. Timezone, core collaboration hours, async tolerance, meeting cadence, communication style. Gen 2 cannot match well without this. Gen 1 does not ask.
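Timezone overlap is the most mechanical of these inputs, so it makes a useful worked example. The function below is a sketch under stated assumptions: working windows are given as local clock hours, offsets are fixed hours from UTC (daylight saving is ignored), and each window is shorter than 24 hours. The name `overlap_hours` is ours, not part of any tool's API.

```python
def overlap_hours(
    a_start: float, a_end: float, a_utc_offset: float,
    b_start: float, b_end: float, b_utc_offset: float,
) -> float:
    """Hours per day during which both local working windows are open.

    Windows are local clock hours (for example 9 to 17) with start < end.
    Offsets are hours ahead of UTC (negative for zones behind UTC).
    """
    # Convert both windows to UTC hours.
    a0, a1 = a_start - a_utc_offset, a_end - a_utc_offset
    b0, b1 = b_start - b_utc_offset, b_end - b_utc_offset

    total = 0.0
    # Windows repeat daily, so compare A against B on the previous,
    # same, and next UTC day to catch overlaps that cross midnight.
    for shift in (-24, 0, 24):
        lo = max(a0, b0 + shift)
        hi = min(a1, b1 + shift)
        total += max(0.0, hi - lo)
    return total


# Berlin (UTC+1) 9 to 17 against New York (UTC-5) 9 to 17.
print(overlap_hours(9, 17, 1, 9, 17, -5))
# Tokyo (UTC+9) 9 to 18 against San Francisco (UTC-8) 9 to 17.
print(overlap_hours(9, 18, 9, 9, 17, -8))
```

The first pair shares two working hours a day and the second shares one. A location label such as "remote, US or EU" would treat both pairings as acceptable, which is the gap an hours-based overlap input is meant to close.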

Industry and product context. What your product does, who the users are, what past experience a candidate needs to ramp up in weeks instead of months. This is the biggest single differentiator in match quality.

Longevity target. Is this a 3-month contract or a 2-year hire? Gen 2 weights candidates differently based on the time horizon.

Constraints. Compliance requirements (FCRA, GDPR, region-specific), must-have or must-avoid tool experience, language requirements.

At SethAI we collect this upfront in a 20-minute intake call before generating any shortlist. Gen 1 tools skip this step, which is both a feature (faster) and a failure mode (matches that look right on paper and fail in practice).

How accurate is AI developer matching?

Accuracy is the most slippery number in the industry. Vendors quote 80% or 90% match accuracy, but the definition of "accurate" varies wildly.

Definition 1: Match score correlation with interview conversion. Did candidates who scored highly actually get interviewed more often? Useful, but a low bar. A Gen 1 system can easily hit 85% on this because it is essentially measuring whether its model predicts recruiter behavior.

Definition 2: Shortlist precision. Of the candidates shortlisted, how many were actually qualified for the role on closer review? Better metric. Gen 1 tools typically hit 40 to 60 percent. Gen 2 tools can hit 80 percent or higher because the shortlist is narrower.

Definition 3: Retention prediction accuracy. Did the hired candidate stay for the expected term? This is the metric that matters economically, and the only one that distinguishes Gen 1 from Gen 2 honestly. Gen 1 is rarely measured on this. Gen 2 is explicitly optimized for it.
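The three definitions measure different things, which is clearer once they are written down. The functions below are a hedged sketch: the names, the score threshold, and the use of a simple rate difference for Definition 1 are our assumptions, and the sample numbers are invented for illustration rather than drawn from any vendor.

```python
def _rate(flags: list[bool]) -> float:
    """Share of True values; raises on empty input rather than guessing."""
    if not flags:
        raise ValueError("need at least one observation")
    return sum(flags) / len(flags)


def interview_rate_lift(
    scores: list[float], interviewed: list[bool], threshold: float
) -> float:
    """Definition 1 (simplified): interview rate of high scorers minus low scorers."""
    high = [i for s, i in zip(scores, interviewed) if s >= threshold]
    low = [i for s, i in zip(scores, interviewed) if s < threshold]
    return _rate(high) - _rate(low)


def shortlist_precision(qualified_on_review: list[bool]) -> float:
    """Definition 2: share of shortlisted candidates judged qualified on review."""
    return _rate(qualified_on_review)


def retention_prediction_accuracy(
    predicted_stay: list[bool], actual_stay: list[bool]
) -> float:
    """Definition 3: share of hires whose predicted retention matched reality."""
    if len(predicted_stay) != len(actual_stay):
        raise ValueError("prediction and outcome lists must align")
    return _rate([p == a for p, a in zip(predicted_stay, actual_stay)])


lift = interview_rate_lift(
    [0.9, 0.8, 0.4, 0.3], [True, True, False, True], threshold=0.5
)
precision = shortlist_precision([True, True, False, True, False])
retention = retention_prediction_accuracy(
    [True, True, False, True], [True, False, False, True]
)
print(lift, precision, retention)
```

Only the third function needs data collected months after the hire, which is one practical reason it is quoted least often.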

When a vendor quotes an accuracy number, ask which definition they are using. The answer is diagnostic. If they cannot articulate it clearly, they are probably quoting Definition 1 and hoping you will not notice.

Can AI matching tools replace recruiters?

The short answer is no, and any vendor claiming otherwise is selling something. AI matching tools are best treated as a leverage layer on top of human recruiters, not a replacement.

What AI does well: process hundreds of profiles quickly, apply consistent scoring, reduce the "who did we miss" risk, flag subtle signals a human misses under volume.

What humans do better: interpret ambiguous signals, make judgment calls when data is thin, have trust-building conversations, spot cultural red flags, negotiate compensation honestly.

The combination wins. A human recruiter working with a Gen 2 matching tool will outperform either one alone. This is consistent with our findings and with public data from recruiting teams using AI matching in 2025 and 2026.

What does a real AI matching workflow look like at Workforce Next?

To make this concrete, here is the actual workflow SethAI runs when a customer submits a role. Timing comes from median engagements.

Hour 0: 20-minute intake call. We capture role, team context, industry, lifestyle requirements, longevity target, and any constraints.

Hours 0 to 4: SethAI generates a ranked shortlist of 3 to 5 candidates from the consent pool. A human recruiter reviews and rejects any that feel off.

Hour 48: Shortlist delivered to the customer with a match summary for each candidate explaining why they ranked highly on which parameters.

Days 3 to 7: Customer interviews the shortlist. We do not pre-interview candidates, so the conversation stays direct between customer and candidate.

Week 2: Paid trial week begins with the chosen candidate. If it does not work out, we rematch without a charge.

Months 3, 6, and 12: Retention check-ins feed back into the model. Every successful placement teaches SethAI what a good match looks like for similar future roles.

If you want to see this workflow in action for your specific role, reach out and we will run it within 48 hours.

Which generation of AI matching should you use?

Pick based on the hiring problem you actually have, not on how the tool markets itself.

Use Gen 1 (Eightfold, SeekOut, HireEZ) if: you source high volumes of candidates per quarter, you have dedicated TA operations to filter noisy shortlists, your hiring decisions are based more on resume patterns than on fit, and you accept the FCRA-adjacent legal exposure.

Use Gen 2 (SethAI and similar) if: a single bad hire is expensive for you, you care about 12-to-18-month retention more than pipeline size, your team has specific lifestyle or industry requirements, and you want a smaller shortlist that is more likely to convert.

Use both if: you run enterprise-scale sourcing but want a fit-focused final pass. Gen 1 for discovery, Gen 2 for ranking. This pattern is becoming common in companies hiring 50+ engineers a year.

For a full side-by-side of the 11 tools in this category, read our honestly ranked list of the 11 best AI developer matching tools in 2026. For the category definition behind Gen 2, see what lifestyle-fit matching is and why skills-only AI keeps failing.