The "hire a software testing team" search query is a clearer signal than "hire a software tester." Buyers who type the team variant know they have a multi-person problem: a release pipeline with parallel automation work, a regression surface that grew past what one person can run, or a 24/7 product that needs continuous coverage. This post is the honest 2026 buyer guide for that decision: when a team beats a single tester, what shape the team should take, and how to budget for it.

Read this alongside our QA engineers and testers page for the production view of how we staff testing pods.

When does a software testing team beat hiring a single tester?

The honest answer is when any of the following is true, and especially when more than one is:

- The product has more than one release surface. Web plus iOS plus Android, or web plus an internal admin app, or a public API plus a customer-facing UI. One tester cannot own all of these without something slipping.

- The release cadence is faster than one person can sustain. Weekly releases, multiple environments, and a regression suite that takes more than two hours to run start to break a single tester's calendar.

- You need automation and exploratory coverage in parallel. SDET work (writing automation suites) and manual exploratory work (catching the bugs the suite cannot) are different skill sets and different time profiles. One person doing both does both badly.

- You have on-call or release-window coverage needs. 24/7 production coverage or staged-rollout monitoring requires more than one human in the loop.

- Compliance or audit requires documented test ownership across roles. SOC 2, HIPAA, and similar audits ask "who owns testing for this surface" and a single-tester answer often does not pass review.

If none of these are true, hire a single senior tester instead. The team format is overhead until the surface justifies it.

What shape should the testing team actually take?

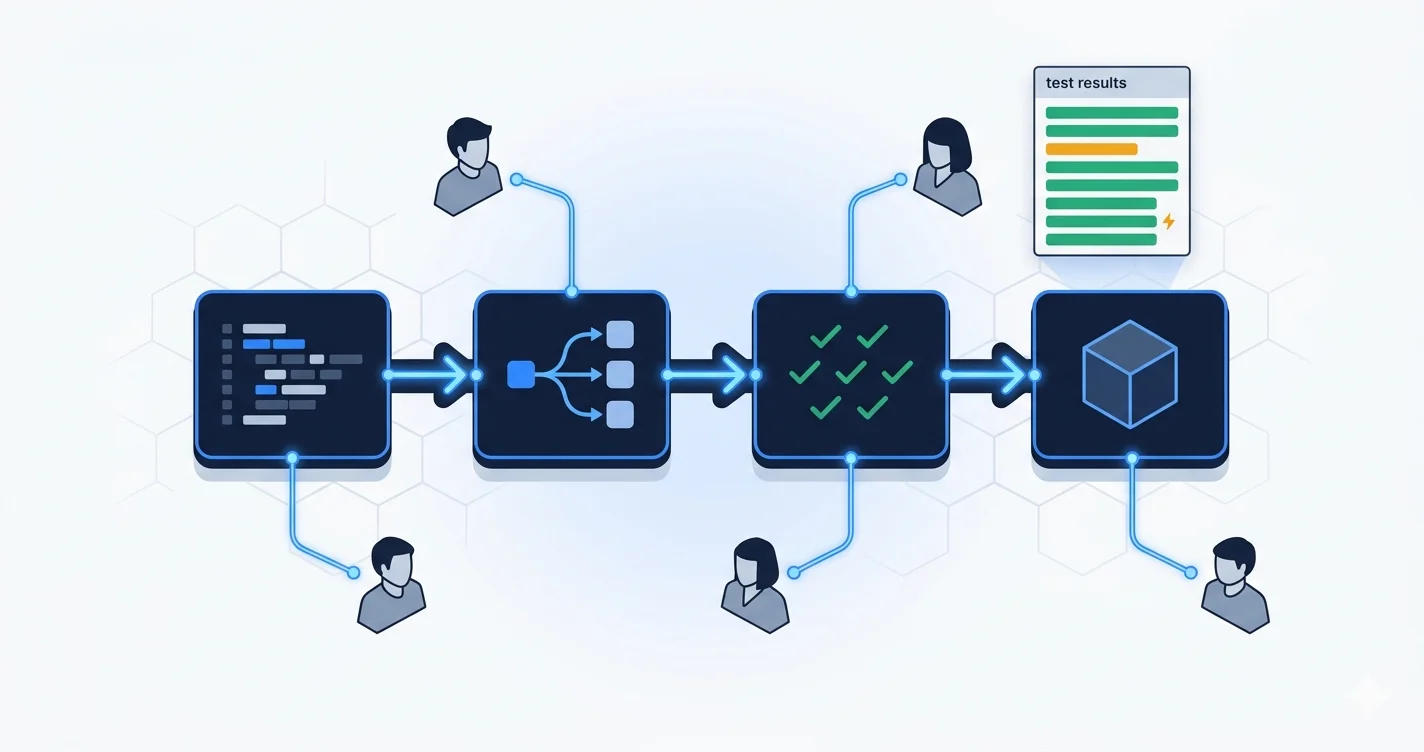

The 2026 default pod for a multi-platform product with a steady release cadence:

- 1 SDET lead. Owns the test architecture, the CI integration, the framework choice, and the long-term direction of automation. Senior engineer, ideally with shipped Playwright, Cypress, or equivalent at production scale.

- 2 to 3 SDETs. Write and maintain automation suites across web, mobile, and API surfaces. Each owns one or two surfaces day to day.

- 1 manual or exploratory tester. Runs the bug-hunting, edge-case, and accessibility coverage that automation cannot match. Often pairs with the SDETs to convert recurring manual cases into automation.

Smaller pods (3 people total) fold the manual role into the SDET workload; they lose some exploratory coverage but cost less. Larger pods (6+) start to need an internal QA manager, which is a different shape of hire and a different cost line.

What does an SDET actually do that a manual tester does not?

SDET stands for Software Development Engineer in Test. The term has been overused, so the honest definition: an SDET is an engineer who writes code that tests code. They are not running test cases by hand; they are writing and maintaining the automation suites, the CI integration, the test data fixtures, and the infrastructure that catches regressions before a human ever sees them.

Concrete responsibilities:

- Authoring and maintaining E2E suites in Playwright (web) and Detox or XCUITest (mobile), plus load and performance tests in k6.

- Writing and reviewing API contract tests, often using tooling like Pact, or Postman collections run through Newman in CI.

- Building and tuning the CI pipeline so tests fail fast, parallelize properly, and produce useful failure artifacts (screenshots, traces, console logs).

- Maintaining test data and environment management so tests are deterministic across runs.

- Triaging flaky tests aggressively and treating flake as a real bug, not a tolerated nuisance.
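One way to make "treat flake as a real bug" concrete is to flag any test that both passed and failed on the same commit, since the code did not change between those runs. The sketch below is a minimal illustration of that idea; the record shape `(test_name, commit_sha, passed)` and the sample test names are assumptions for the example, not the export format of any particular CI tool.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return test names that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples. This record
    shape is an assumption for the sketch, not a specific CI tool's output.
    """
    outcomes = defaultdict(set)  # (test_name, commit_sha) -> set of observed results
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)

    # Same code, different result: the test itself is nondeterministic.
    flaky = {name for (name, _sha), seen in outcomes.items() if seen == {True, False}}
    return sorted(flaky)


# Hypothetical run history for illustration only.
history = [
    ("checkout_happy_path", "a1b2c3", True),
    ("checkout_happy_path", "a1b2c3", False),  # same commit, different outcome
    ("login_redirect", "a1b2c3", True),
    ("login_redirect", "d4e5f6", False),       # different commit: possibly a real regression
    ("search_filters", "d4e5f6", True),
]

flaky_tests = find_flaky_tests(history)
print(flaky_tests)
```

A report like this only surfaces candidates. The root-cause work (timing, hidden state, network nondeterminism) still has to be done by the engineer who owns the test.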

A manual or exploratory tester, by contrast, is a domain expert who finds the bugs automation cannot. They run sessions targeted at specific risk areas, pair with engineers on edge cases, and validate user-facing flows that automated suites cannot judge as cleanly. In 2026, the manual tester role is smaller than it was a decade ago but still essential for products where user experience nuance matters.

How much does a software testing team cost in 2026?

Realistic pricing for an India-based testing pod, all-in to the client:

- 3-person pod (1 lead + 2 SDETs). USD 14,000 to 22,000 per month. Annualized: USD 168,000 to 264,000.

- 4-person pod (1 lead + 2 SDETs + 1 manual). USD 18,000 to 28,000 per month. Annualized: USD 216,000 to 336,000.

- 5-person pod (1 lead + 3 SDETs + 1 manual). USD 22,000 to 34,000 per month. Annualized: USD 264,000 to 408,000.

For comparison, a single fully loaded US senior tester or SDET costs USD 170,000 to 240,000 per year. A 3-person India pod costs roughly the same per year as one US senior (USD 168,000 to 264,000), with three people working in parallel across surfaces instead of one. That math is the primary driver behind the offshore testing-pod model. Full pricing for senior India developers is in our 2026 senior Indian developer salary post.
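The annualized figures are simply the monthly ranges multiplied by 12. A short sketch of that arithmetic, using only the numbers quoted in this section, makes the comparison with a US senior hire easy to rerun with your own quotes. The midpoint ratio is a rough convenience measure we introduce here for illustration, not a pricing formula.

```python
# Monthly all-in price ranges (USD) quoted in this section for India-based pods.
POD_MONTHLY_USD = {
    "3-person": (14_000, 22_000),
    "4-person": (18_000, 28_000),
    "5-person": (22_000, 34_000),
}

# Fully loaded annual cost range (USD) quoted for one US senior tester or SDET.
US_SENIOR_ANNUAL_USD = (170_000, 240_000)


def annualize(monthly_range):
    """Convert a (low, high) monthly range into a (low, high) annual range."""
    low, high = monthly_range
    return low * 12, high * 12


def midpoint(value_range):
    """Midpoint of a (low, high) range, used only for a rough comparison."""
    low, high = value_range
    return (low + high) / 2


annual_by_pod = {name: annualize(r) for name, r in POD_MONTHLY_USD.items()}

for name, (low, high) in annual_by_pod.items():
    ratio = midpoint((low, high)) / midpoint(US_SENIOR_ANNUAL_USD)
    print(f"{name} pod: USD {low:,} to {high:,} per year, "
          f"about {ratio:.2f}x one US senior at midpoint")
```

Swap in the monthly quote you actually receive and your own fully loaded local cost to see where the comparison lands for your market.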

What engagement model fits which company stage?

Three patterns we see across active engagements:

- Fractional pod (20 to 30 hours per week, per role). Best for early-stage teams where the surface area is growing but not yet at full-time pod scale. Typically a lead plus one SDET, both fractional.

- Full-time dedicated pod. Best for funded startups and mid-market companies with continuous release pressure. The pod is embedded, attends standups, and owns the test pipeline end to end.

- Audit-and-build engagement (8 to 12 weeks). Best for companies inheriting a messy test stack or preparing for an audit. The pod runs a deep audit, ships the highest-impact automation in the first 6 weeks, then either rolls into ongoing dedicated work or hands off to an in-house team.

The audit-and-build model is the most common entry shape for first-time customers. It de-risks the relationship for both sides and surfaces the actual long-term shape of the testing problem.

How do you screen for a real SDET vs a junior automation engineer?

Six screening signals that separate SDETs who ship from candidates who have only written tutorial-level Playwright:

- Flake awareness. Ask candidates to walk through a flaky test they hit and fixed. Strong candidates explain the root cause (timing, hidden state, network nondeterminism) and the structural fix. Weak ones added a retry and called it done.

- CI design. Show candidates a slow CI pipeline and ask how they would speed it up without adding cost. Strong answers reach for caching, parallelism, and selective execution. Weak ones suggest bigger runners.

- Test data discipline. Ask how they handle test data setup and teardown. Strong candidates have a fixture strategy. Weak ones use production seed data and hope.

- Contract testing fluency. Ask whether they have shipped Pact or equivalent in production. Strong candidates explain the consumer-driven flow; weak ones confuse it with API smoke tests.

- Review of a real PR. Show them a non-trivial pull request and ask what they would test. Strong candidates identify the risk axes (state transitions, error paths, concurrency). Weak ones write a happy-path test only.

- Manual collaboration. Ask how they work with the manual tester on the team. Strong candidates describe a feedback loop where manual sessions feed automation backlog. Weak ones treat the two roles as separate.
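For the test data discipline signal, it helps to have a picture of what a "fixture strategy" means in practice: each test builds the data it needs in an isolated store and tears it down afterward, even on failure. The sketch below shows one minimal way to do that with Python's standard library. The `orders` table, `order_fixture`, and `total_for` are hypothetical names invented for the illustration.

```python
import sqlite3
from contextlib import contextmanager


@contextmanager
def order_fixture(customer_name="test-customer", order_total=4200):
    """Create an isolated in-memory database, seed it, and always tear it down."""
    conn = sqlite3.connect(":memory:")  # fresh database per test, no shared state
    try:
        conn.execute(
            "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total_cents INTEGER)"
        )
        conn.execute(
            "INSERT INTO orders (customer, total_cents) VALUES (?, ?)",
            (customer_name, order_total),
        )
        conn.commit()
        yield conn
    finally:
        conn.close()  # teardown runs even if the test body raises


def total_for(conn, customer):
    """Hypothetical code under test: sum a customer's order totals in cents."""
    row = conn.execute(
        "SELECT COALESCE(SUM(total_cents), 0) FROM orders WHERE customer = ?",
        (customer,),
    ).fetchone()
    return row[0]


def test_total_for_known_customer():
    with order_fixture(customer_name="alice", order_total=1500) as conn:
        assert total_for(conn, "alice") == 1500


def test_total_for_unknown_customer_is_zero():
    with order_fixture() as conn:
        assert total_for(conn, "nobody") == 0


test_total_for_known_customer()
test_total_for_unknown_customer_is_zero()
```

A candidate with real fixture experience will usually describe something with this shape, scaled up: explicit setup, guaranteed teardown, and no dependence on data left behind by another test.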

For our own placements, every SDET is screened by SethAI on these six signals plus the longevity layer that determines whether the engineer stays past the first three months. The full hiring breakdown is on our QA engineers and testers page.

What testing stack does the modern pod ship in 2026?

The honest 2026 production stack across active testing pods:

- Web E2E: Playwright is the default. Cypress is still common in older codebases.

- Mobile E2E: Detox or Maestro for cross-platform; XCUITest (iOS) and Espresso (Android) for native-only apps.

- API and contract testing: Postman collections run through Newman for smoke testing; Pact for consumer-driven contract tests where the team is large enough to warrant it.

- Performance and load: k6 for HTTP load tests; Gatling for higher-volume scenarios.

- Visual regression: Percy, Chromatic, or Argos depending on stack and budget.

- CI: GitHub Actions or GitLab CI, with strict parallelism and aggressive caching.

- Reporting: Allure, Currents, or built-in CI reports; the goal is failure artifacts that engineers can act on without running the test locally.
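"Strict parallelism" in CI usually comes down to splitting the suite into shards that finish at about the same time. As a rough illustration of the balancing idea, the sketch below assigns tests to shards greedily, longest first, using recorded durations. The file names and timings are hypothetical, and test runners such as Playwright ship their own sharding options, so treat this as an explanation of the principle rather than a replacement for them.

```python
import heapq


def shard_by_duration(durations, shard_count):
    """Split tests into shards with roughly equal total runtime.

    Greedy longest-first assignment: sort tests by duration descending and
    always give the next test to the currently lightest shard.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")

    # Heap entries are (total_seconds, shard_index) so the lightest shard pops first.
    heap = [(0.0, index) for index in range(shard_count)]
    heapq.heapify(heap)
    shards = [[] for _ in range(shard_count)]

    ordered = sorted(durations.items(), key=lambda item: (-item[1], item[0]))
    for test_name, seconds in ordered:
        total, index = heapq.heappop(heap)
        shards[index].append(test_name)
        heapq.heappush(heap, (total + seconds, index))

    return shards


# Hypothetical per-file timings in seconds, e.g. taken from a previous CI run.
timings = {
    "checkout.spec": 300,
    "search.spec": 240,
    "login.spec": 120,
    "profile.spec": 90,
    "settings.spec": 60,
}

shards = shard_by_duration(timings, shard_count=2)
for index, tests in enumerate(shards):
    print(index, sum(timings[t] for t in tests), tests)
```

With these sample timings the two shards come out at 390 and 420 seconds, against 810 seconds for a serial run. The greedy approach is not guaranteed to be optimal, but it is simple and usually close enough for CI.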

The single biggest 2026 trend in testing pods is the move toward assisted test generation: recorders like Playwright's codegen, AI assistants like GitHub Copilot for tests, and dedicated AI test-generation services. We use these as productivity multipliers, not replacements for engineering judgment. The tool generates the first draft; the SDET reviews, refactors, and accepts.

How do you migrate from outsourced individual testers to a full pod?

If you currently rely on individual offshore testers and want to upgrade to a coordinated pod, the migration that works:

- Audit the current test stack. What is automated, what is manual, what coverage gap is the most expensive? Audit takes 1 to 2 weeks.

- Hire the SDET lead first. The lead sets the direction. Hiring the rest of the pod before the lead means buying coordination work for yourself.

- Phase the pod expansion over 4 to 8 weeks. Lead plus one SDET in week 1, second SDET in week 4, manual tester in week 6 to 8. Each addition gets onboarded properly rather than dropped into chaos.

- Migrate ownership of existing automation gradually. Existing testers either join the new pod or transition out. Both can work; the choice depends on each individual's career direction.

The full migration from "individual testers" to "coordinated pod" usually completes in 8 to 12 weeks. It also removes coordination overhead that was costing the individual testers more time than anyone had accounted for.

Final word

The reason "hire a software testing team" is a sharper search query than "hire a tester" is that it correctly names the actual buyer problem. One tester cannot own a multi-platform release pipeline with weekly cadence and an audit looming. A 3-to-5-person pod can. The math is favorable, the engagement shapes are well-understood, and the bench exists.

If you are about to hire a testing team and want a pod matched in 48 hours, talk to us. We will scope the surface area, recommend the right pod size, and start a paid trial with the lead before you commit to the full pod.