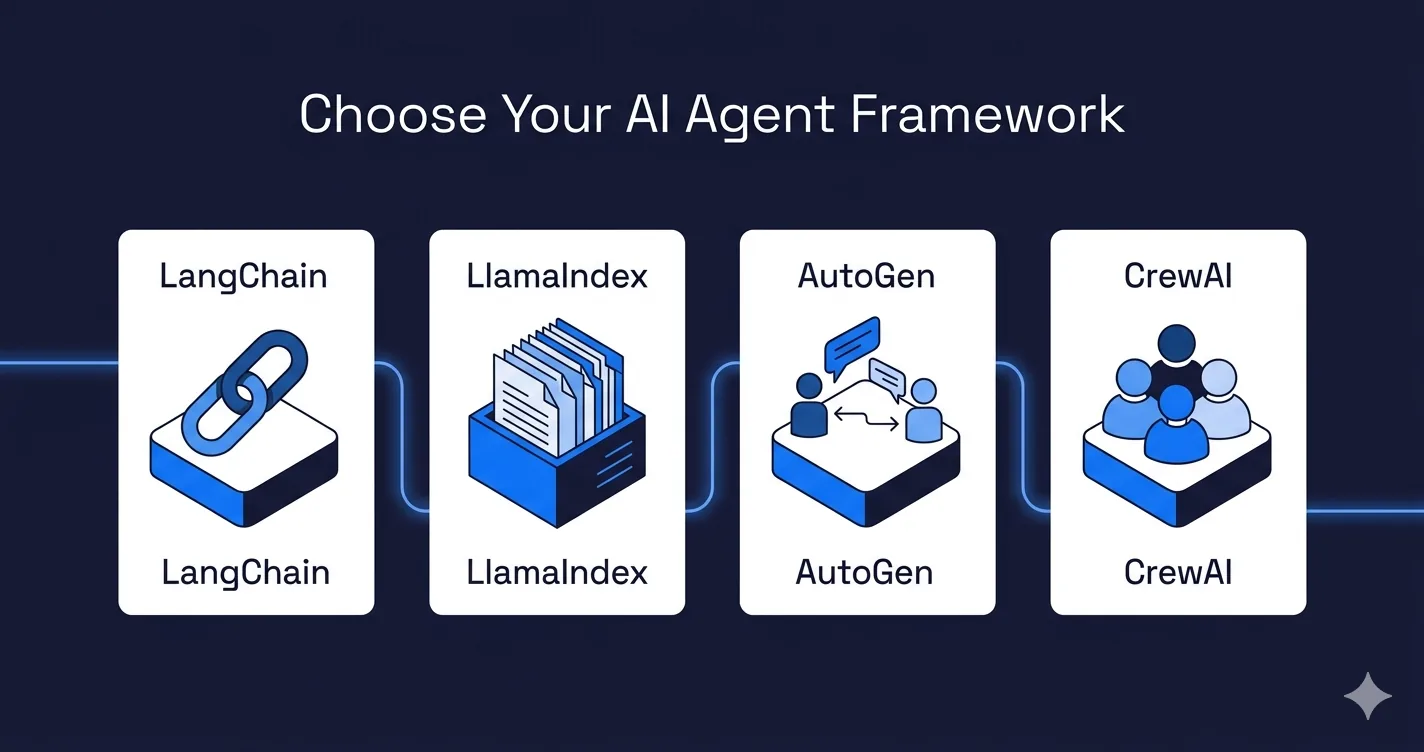

Picking an AI agent framework is one of those decisions that looks small on a tooling survey and then quietly shapes six months of engineering effort. LangChain, LlamaIndex, AutoGen, and CrewAI all claim to do similar things. They do not. Pick the wrong one for your use case and you spend a quarter fighting the framework instead of shipping the feature.

This post walks through what each framework is actually good at, when to pick which, and what to verify before committing. If you are about to hire engineers around a framework choice, read this first so you do not hire for the wrong stack.

What does an AI agent framework actually do?

An agent framework wraps three things: calling an LLM, letting the LLM call tools (search, APIs, databases), and orchestrating multi-step workflows where the output of one step feeds the next. The frameworks differ in how much opinion they impose on each layer, and how much they help with the parts that are genuinely hard: retries, state, memory, and multi-agent coordination.
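To make those three layers concrete, here is a minimal framework-free sketch of the loop. It is an illustration under stated assumptions, not any library's API: `fake_model` is a scripted stand-in for a real LLM call, and the tool names and message shapes are hypothetical.

```python
from typing import Callable

# Hypothetical tools: plain functions the "model" is allowed to call.
def search_docs(query: str) -> str:
    return f"top result for '{query}'"

def add(a: str, b: str) -> str:
    return str(int(a) + int(b))

TOOLS: dict[str, Callable[..., str]] = {"search_docs": search_docs, "add": add}

def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a real LLM call (assumption): request one tool, then answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"The answer is {last['content']}."}
    return {"type": "tool_call", "name": "add", "args": {"a": "2", "b": "3"}}

def run_agent(question: str, model=fake_model, max_steps: int = 5) -> str:
    """Orchestration layer: call the model, run requested tools, feed results back."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["content"]
        tool = TOOLS[reply["name"]]          # layer 2: tool dispatch
        result = tool(**reply["args"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent("What is 2 + 3?"))
```

Everything a framework adds (retries, persistent state, memory, multi-agent routing) is layered around a loop of roughly this shape.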

If your use case is a single LLM call with no tools, you do not need a framework. Calling the OpenAI or Anthropic SDK directly is enough. Frameworks start earning their keep when you have tool calls, retrieval, or multiple reasoning steps.

When should you use LangChain (or LangGraph)?

LangChain is the broadest option. It ships adapters for essentially every model provider, vector database, and document loader you can name. If you need to prototype something quickly across a heterogeneous stack, LangChain gets you from zero to working in an afternoon.

The honest downside is that LangChain's abstractions have churned hard since 2023. Production teams have complained about breaking changes between minor versions, and the core project has split into LangChain (the toolkit), LangGraph (the agent state machine), and LangSmith (observability). For anything beyond a linear chain, LangGraph is the part you actually want. LangGraph models agent workflows as explicit graphs with state, which is a much better fit for production than the older AgentExecutor pattern.
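The "explicit graph with state" idea can be sketched in plain Python. This is deliberately not LangGraph's API; `TinyGraph`, the node names, and the state keys are all hypothetical, chosen only to show nodes that transform a shared state and routers that pick the next node.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]
Router = Callable[[State], Optional[str]]  # returns next node name, or None to stop

class TinyGraph:
    """Hypothetical mini state machine, for illustration only."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.routers: dict[str, Router] = {}

    def add_node(self, name: str, fn: Node, router: Router) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        current: Optional[str] = start
        steps = 0
        while current is not None:
            if steps >= max_steps:
                raise RuntimeError("graph did not terminate")
            state = self.nodes[current](state)      # node updates the state
            current = self.routers[current](state)  # router picks the next node
            steps += 1
        return state

def draft(state: State) -> State:
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "text": f"draft v{attempts}"}

def review(state: State) -> State:
    # Stub reviewer (assumption): approves from the second attempt onward.
    return {**state, "approved": state["attempts"] >= 2}

graph = TinyGraph()
graph.add_node("draft", draft, lambda s: "review")
graph.add_node("review", review, lambda s: None if s["approved"] else "draft")
final = graph.run("draft", {"attempts": 0, "approved": False})
print(final)
```

Because the loop, the state, and the exit condition are all explicit, a stuck workflow shows up as a visible cycle rather than as opaque agent behavior.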

Pick LangChain (or LangGraph) when you want the largest ecosystem, do not want to write integration code for niche tools, and have engineers willing to version-pin aggressively. Our LangChain developers spend more time on LangGraph than on classic LangChain in 2026 production work.

When is LlamaIndex the better fit?

LlamaIndex started as a retrieval library and is still the clearest choice when retrieval is the core problem. Document ingestion, chunking strategies, hybrid search, and query rewriting are more polished in LlamaIndex than in LangChain. If you are building a knowledge assistant over your own documents, or an enterprise search layer, LlamaIndex will get you further with less plumbing.
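As a rough picture of what a chunking strategy involves, here is a plain-Python sketch of fixed-size chunking with overlap. It is not LlamaIndex's splitter; `chunk_words` and its word-based sizing are assumptions for illustration (real splitters usually count tokens and respect sentence boundaries).

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of `size` words, each sharing `overlap` words
    with the previous chunk so context is not cut at chunk boundaries."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, size=4, overlap=1):
    print(chunk)
```

Tuning `size` and `overlap` against your own documents is exactly the kind of plumbing a retrieval-focused library takes off your hands.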

LlamaIndex has grown into full agent workflows under its Workflows API, but if retrieval is not the core of your workload, you will probably end up fighting the framework. Teams that try to build complex multi-agent systems in LlamaIndex often end up wishing they had used LangGraph or AutoGen.

Pick LlamaIndex when retrieval quality over your documents is the thing that will make or break the product. See our post on RAG vs fine-tuning for deciding whether retrieval is actually your bottleneck.

When does AutoGen win?

AutoGen, from Microsoft Research, is opinionated about one thing: multiple agents talking to each other. If your use case genuinely needs a coder agent, a reviewer agent, and a user-proxy agent collaborating in a loop, AutoGen handles that pattern natively. The v0.4 rewrite made it event-driven and more production-friendly, though the community is still smaller than LangChain's.
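The coder, reviewer, and user-proxy pattern can be sketched without any framework. The code below is not AutoGen's API; the `Message` type and the stub agents are hypothetical, with scripted behavior standing in for LLM calls.

```python
from dataclasses import dataclass

@dataclass
class Message:
    sender: str
    content: str

def coder(history: list[Message]) -> Message:
    """Stub coder (assumption): ships a buggy draft, then a fix after feedback."""
    got_feedback = any(m.sender == "reviewer" for m in history)
    code = "def add(a, b): return a + b" if got_feedback else "def add(a, b): return a - b"
    return Message("coder", code)

def reviewer(history: list[Message]) -> Message:
    """Stub reviewer (assumption): checks only the most recent message."""
    latest = history[-1].content
    verdict = "APPROVE" if "a + b" in latest else "REJECT: add() subtracts"
    return Message("reviewer", verdict)

def run_conversation(task: str, max_rounds: int = 3) -> list[Message]:
    """The user proxy opens the conversation; coder and reviewer alternate
    until the reviewer approves or the round budget runs out."""
    history = [Message("user_proxy", task)]
    for _ in range(max_rounds):
        history.append(coder(history))
        history.append(reviewer(history))
        if history[-1].content.startswith("APPROVE"):
            break
    return history

for msg in run_conversation("Write add(a, b)"):
    print(f"{msg.sender}: {msg.content}")
```

The `max_rounds` cap matters: without a budget, two agents that never agree will loop until the token bill stops them.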

The trap is reaching for AutoGen just because "multi-agent" sounds exciting. Most problems that look like multi-agent problems are actually single-agent-with-tools problems. Writing three agents to do what one well-prompted agent with three tools could do is a common way to burn months of engineering for no measurable quality gain.

Pick AutoGen when you have a clear reason multiple agents with distinct roles beats a single agent with tools, and when you have the observability budget to debug agent conversations going off the rails.

Where does CrewAI actually shine?

CrewAI is the newest of the four and the easiest to describe: it models agent teams as "crews" with named roles, goals, and tasks. For content pipelines, research assistants, and any workflow that maps cleanly to "here is a team of specialists, each with a job," CrewAI reads almost like pseudo-code.
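To show why the crew metaphor reads like pseudo-code, here is a plain-Python sketch of roles, goals, and tasks. It is not CrewAI's API; the `Agent`, `Task`, and `run_crew` names are hypothetical, and the lambdas stand in for LLM-backed work.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Agent:
    role: str
    goal: str
    work: Callable[[str], str]  # stand-in for an LLM-backed step (assumption)

@dataclass
class Task:
    description: str
    agent: Agent

def run_crew(tasks: list[Task], topic: str) -> str:
    """Run tasks in order; each task's output becomes the next task's input."""
    output = topic
    for task in tasks:
        output = task.agent.work(output)
    return output

researcher = Agent("Researcher", "Collect facts", lambda topic: f"notes on {topic}")
writer = Agent("Writer", "Turn notes into a draft", lambda notes: f"article from {notes}")

result = run_crew(
    [Task("Research the topic", researcher), Task("Write the article", writer)],
    "agent frameworks",
)
print(result)
```

A strictly sequential hand-off like this is the shape the crew metaphor fits best; branching or looping workflows are where it starts to feel restrictive.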

It is lighter-weight than AutoGen and opinionated about structure in a way that reduces decision fatigue. The tradeoff is less flexibility. If your workflow does not fit the crew metaphor, CrewAI will feel restrictive fast.

Pick CrewAI when your team is newer to agent systems, your workflows map to named roles, and you want to ship quickly without fighting framework internals. Our AI developers reach for CrewAI on content-generation and research-automation projects, and for LangGraph on anything with heavy state. For the broader stack around whichever framework you pick, see our AI MVP tech stack guide.

How do these frameworks compare on the criteria that matter?

A side-by-side view of the criteria that usually decide the choice:

| Criterion | LangChain / LangGraph | LlamaIndex | AutoGen | CrewAI |
|---|---|---|---|---|
| Ecosystem breadth | Widest | Narrower (retrieval-focused) | Narrower | Narrowest |
| Retrieval quality | OK | Best of the four | OK via plugins | OK via tools |
| Multi-agent support | Good (LangGraph) | OK (Workflows API) | Best of the four | Good (crews) |
| Learning curve | Steep | Medium | Steep | Low |
| Production maturity | High | High | Medium | Medium |
| API stability | Historically churny | Stable | Rewritten recently | Still evolving |

"Best of the four" means best of this set, not a solved problem. You will still write integration code and debug edge cases regardless of which one you pick.

Which framework fits which team and use case?

Rough buckets we use when consulting:

Small team, one product, RAG-heavy. Start with LlamaIndex. Its retrieval defaults are sane and you will spend less time on chunking and query rewriting than in LangChain.

Small team, broad tool integration, heterogeneous stack. LangChain with LangGraph for orchestration. You get every integration under the sun at the cost of keeping up with version churn.

Content pipelines, research assistants, simple agent teams. CrewAI. You will ship in weeks, not quarters. Move to something more flexible later if you outgrow it.

Research-grade multi-agent work where agents genuinely need to argue with each other. AutoGen. Budget for observability from day one.

Production systems where every cent counts at scale. Build on LangGraph or a thin custom layer directly over the model SDK. Frameworks add convenience, but they also add latency and token overhead that compound as request volume grows.
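A back-of-the-envelope calculation shows how token overhead compounds with volume. Every number below is hypothetical (request volume, tokens per request, price, and the 300-token overhead are made up for illustration); measure your own prompts before drawing conclusions.

```python
def monthly_token_cost(requests_per_day: int, tokens_per_request: int,
                       usd_per_million_tokens: float, days: int = 30) -> float:
    """Token spend for one month at a flat per-token price."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * usd_per_million_tokens

# Hypothetical inputs: 100k requests/day at $1.00 per million tokens.
lean = monthly_token_cost(100_000, 1_000, 1.0)     # thin layer over the SDK
wrapped = monthly_token_cost(100_000, 1_300, 1.0)  # +300 tokens of scaffolding per call
overhead = wrapped - lean

print(f"lean: ${lean:,.0f}  wrapped: ${wrapped:,.0f}  overhead: ${overhead:,.0f}/month")
```

The same per-call overhead is invisible in a prototype and a real line item at production volume, which is the whole argument for measuring it early.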

What if you need none of them?

A small but growing number of production AI teams have quietly abandoned frameworks and write against the OpenAI and Anthropic SDKs directly. The reasoning is simple. Tool calling is native in the model APIs now. State is handled by your own database. Observability comes from OpenTelemetry or a dedicated vendor. The framework's job of "wrapping the model API" shrinks every quarter as the APIs get more capable.
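"State is handled by your own database" can be as small as a message table. The sketch below uses SQLite from the Python standard library; the schema and function names are assumptions for illustration, not a recommended production design.

```python
import sqlite3

def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a conversation store. In-memory by default for the demo."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS messages ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "session_id TEXT NOT NULL, role TEXT NOT NULL, content TEXT NOT NULL)"
    )
    return conn

def append_message(conn: sqlite3.Connection, session_id: str, role: str, content: str) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
            (session_id, role, content),
        )

def load_history(conn: sqlite3.Connection, session_id: str) -> list[dict]:
    """Return the session's messages in insertion order, ready to send to a model."""
    rows = conn.execute(
        "SELECT role, content FROM messages WHERE session_id = ? ORDER BY id",
        (session_id,),
    ).fetchall()
    return [{"role": role, "content": content} for role, content in rows]

store = open_store()
append_message(store, "s1", "user", "Where is my order?")
append_message(store, "s1", "assistant", "Let me check.")
print(load_history(store, "s1"))
```

Owning this layer means the conversation format is yours to migrate, audit, and query, independent of any framework's memory abstraction.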

This path is longer upfront but cleaner in the long run. You own every line of code, nothing breaks when a framework ships a new version, and your agents are exactly as simple or complex as your problem actually requires. Pick this route when your team has enough AI engineering experience to recognize what a framework was solving and confirm you do not have that problem. For the non-AI parts of your workflow (service orchestration, scheduled jobs, SaaS integrations), see our 2026 comparison of n8n, Power Automate, Step Functions, Camunda, and Zapier to pick the right tool for that layer.

What should you check before committing?

Four things to verify before writing code around any agent framework:

1. Version history for the last 12 months. Count breaking changes on GitHub. If there are more than a handful in minor versions, expect maintenance cost.

2. Who maintains it and how. A single-company backer with commercial incentives carries different risks than a broad open-source community. Both can work. Both can stall. Check commit frequency from at least three distinct contributors.

3. Observability story. Can you see every LLM call, tool call, and agent message in production without writing custom instrumentation? If not, plan that work now.

4. Escape hatch. How hard is it to drop to the raw model SDK for a specific step? Frameworks that fight you on this become painful fast. Good frameworks let you go low-level when you need to.
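For the observability check, it helps to know what the custom instrumentation would look like if the framework does not provide it. Below is a minimal sketch using a decorator that records every wrapped call; the `traced` helper, the in-memory `TRACE` list, and the `lookup_order` tool are hypothetical, and a real system would export to a tracing backend rather than a list.

```python
import functools
import time

TRACE: list[dict] = []  # stand-in for a real tracing backend (assumption)

def traced(kind: str):
    """Record name, duration, and error (if any) for each call to the wrapped function."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            error = None
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                error = repr(exc)
                raise
            finally:
                TRACE.append({
                    "kind": kind,
                    "name": fn.__name__,
                    "seconds": time.perf_counter() - start,
                    "error": error,
                })
        return wrapper
    return decorator

@traced("tool_call")
def lookup_order(order_id: str) -> str:  # hypothetical tool
    return f"order {order_id}: shipped"

print(lookup_order("42"))
print(TRACE[-1]["kind"], TRACE[-1]["name"], TRACE[-1]["error"])
```

If wrapping every model and tool call this way is awkward inside a framework, that is also a useful signal for the escape-hatch check.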

And one more: hire engineers who have shipped with the framework in production, not ones who have only read the docs. The difference between "I built a demo" and "I ran this in production for six months" is six months of painful lessons. If you are staffing an AI project and want engineers who have already paid that tuition, our AI developers and LangChain specialists can help. For the role-shape question (prompt engineer vs AI developer vs platform lead), see our guide on whether you still need a prompt engineer in 2026, and our AI developer interview questions to screen for real framework experience.

The shortest version

LangChain and LangGraph for broad ecosystem and production-grade orchestration. LlamaIndex when retrieval is the hardest problem. AutoGen for genuine multi-agent research loops. CrewAI for simple named-role workflows. None of them, if your team has the chops and values stability over speed. Whatever you pick, plan for the framework to matter less over time as the underlying model APIs swallow more of its job.